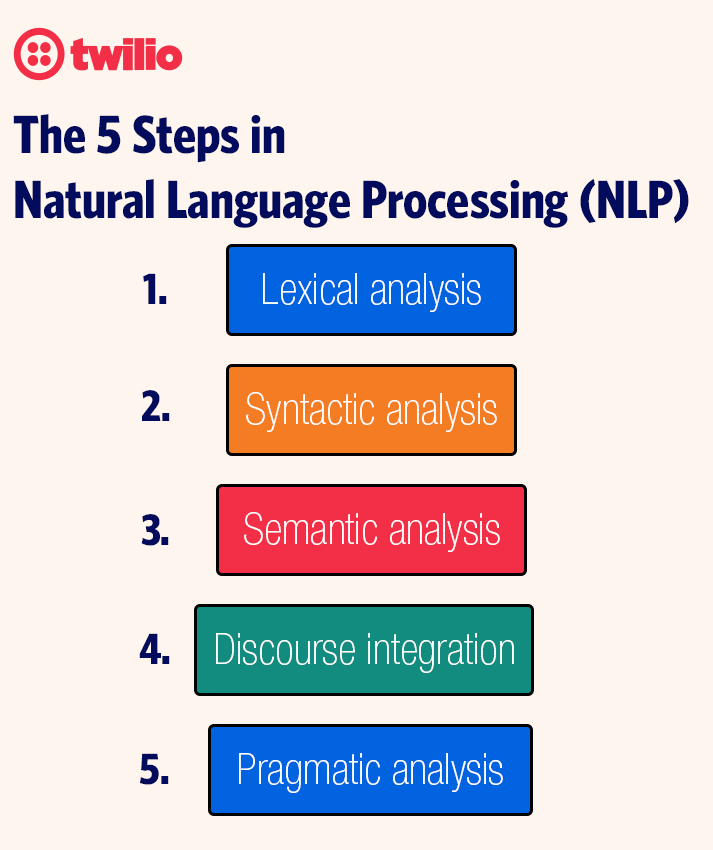

The 5 Steps in Natural Language Processing (NLP)

The world is more digitally connected than ever. Information, insights, and data constantly vie for our attention, and it’s impossible to process it all. The challenge for your business is to know what customers and prospects say about your products and services, but time and limited resources prevent this from happening effectively.

That’s where natural language processing (NLP) comes in. NLP is a subfield of linguistics, computer science, and artificial intelligence. It’s commonly described as a sequence of 5 processing steps that extract insights from large volumes of text, so nobody has to read it all manually. This article discusses the 5 basic NLP steps algorithms follow to understand language and how NLP business applications can improve customer interactions in your organization.

What is NLP?

Natural language processing is commonly described as 5 steps machines follow to analyze, categorize, and understand spoken and written language. Modern NLP systems often carry out these steps with machine learning, including deep neural networks, which are models loosely inspired by how the brain learns and processes data.

Businesses use tools and algorithms that follow the 5 NLP stages to gather insights from large data sets and make informed business decisions. Some NLP business applications include:

- Text to speech: Converting written text into natural-sounding speech

- Chatbots: Helping chatbots understand and respond to customer inquiries

- Urgency detection: Analyzing language to prioritize tasks

- Natural language understanding: Analyzing the meaning and intent of text, including text transcribed from speech

- Autocorrect: Detecting and correcting text errors and suggesting alternatives

- Sentiment analysis: Revealing the perceptions people have of your goods and services and those of your competitors

- Speech recognition: Powering applications that recognize users’ spoken words and decipher their meaning

NLP steps

The best NLP solutions follow 5 processing steps to analyze written and spoken language. Understanding these steps helps you use NLP effectively in your text and voice applications.

1. Lexical analysis

Lexicon describes the understandable vocabulary that makes up a language. Lexical analysis deciphers and segments language into units—or lexemes—like paragraphs, sentences, phrases, and words. NLP algorithms categorize words into parts of speech (POS) and split lexemes into morphemes—meaningful language units that you can’t further divide. There are 2 types of morphemes:

- Free morphemes function independently as words (like “cow” and “house”).

- Bound morphemes make up larger words. The word “unimaginable” contains the morphemes “un-” (a bound morpheme signifying negation), “imagine” (the free morpheme root of the whole word), and “-able” (a bound morpheme denoting that the action of the root morpheme can be done).

For instance, when performing a lexical analysis on the first sentence of this section, the analysis isolates the sentence and divides it into lexeme phrases, like “the understandable vocabulary that makes up a language.” This analysis further divides the phrase into word lexemes, like “vocabulary” and “language,” categorizing both as noun POS. Then, the analysis derives free morphemes, like “vocabulary,” “language,” and “understand,” and bound morphemes, like “-able.”
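As a rough illustration of the lexical step, the sketch below tokenizes text into words and splits a word into morphemes. It's a minimal, dictionary-based toy, not how production NLP libraries work: the `ROOTS`, `PREFIXES`, and `SUFFIXES` tables and both function names are hypothetical and cover only the examples in this article.

```python
import re

# Hypothetical root table: maps a stem as it appears inside a word to its
# free-morpheme form ("imagin" is "imagine" with the final "e" dropped).
ROOTS = {
    "cow": "cow",
    "house": "house",
    "understand": "understand",
    "imagin": "imagine",
}
# Hypothetical affix lists; the empty string means "no affix".
PREFIXES = ("", "un")
SUFFIXES = ("", "able")


def tokenize(text):
    """Split text into lowercase word tokens, dropping punctuation."""
    return re.findall(r"[a-z]+", text.lower())


def split_morphemes(word):
    """Return the morphemes of a word, or the word itself if no split is known."""
    for prefix in PREFIXES:
        for suffix in SUFFIXES:
            if not (word.startswith(prefix) and word.endswith(suffix)):
                continue
            stem = word[len(prefix): len(word) - len(suffix)]
            # Only accept a split whose stem is a known root. This keeps
            # "understandable" from being cut into "un-" + "derstand".
            if stem in ROOTS:
                parts = [ROOTS[stem]]
                if prefix:
                    parts.insert(0, prefix + "-")
                if suffix:
                    parts.append("-" + suffix)
                return parts
    return [word]


print(tokenize("The cow, the house."))
print(split_morphemes("unimaginable"))
print(split_morphemes("understandable"))
```

Checking the stem against a list of known roots is the design choice that matters here: stripping affixes blindly would treat the “un” at the start of “understandable” as a prefix.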

2. Syntactic analysis

Syntax describes how a language’s words and phrases are arranged to form sentences. Syntactic analysis checks word arrangements for proper grammar.

For instance, the sentence “Dave wrote the paper” passes a syntactic analysis check because it’s grammatically correct. Conversely, a syntactic analysis categorizes a sentence like “Dave do jumps” as syntactically incorrect.
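The check described above can be sketched with a tiny pattern matcher. This is a simplified stand-in, assuming a hand-written lexicon and a fixed set of allowed tag sequences; real syntactic analyzers typically use full grammars or trained parsers. The tag names, `LEXICON`, and `ALLOWED_PATTERNS` are hypothetical.

```python
# Hypothetical mini-lexicon: maps each known word to a coarse grammatical tag.
LEXICON = {
    "dave": "PROPN",    # proper noun
    "the": "DET",       # determiner
    "paper": "NOUN",
    "wrote": "V_PAST",  # past-tense verb
    "jumps": "V_3SG",   # third-person singular present verb
    "do": "V_BASE",     # base form, which doesn't agree with a singular subject
}

# Accepted tag sequences: a singular subject, an agreeing verb, and an
# optional "determiner + noun" object.
ALLOWED_PATTERNS = {
    ("PROPN", verb) + obj
    for verb in ("V_PAST", "V_3SG")
    for obj in ((), ("DET", "NOUN"))
}


def tag(sentence):
    """Look up a tag for every word; unknown words get 'UNKNOWN'."""
    words = sentence.lower().rstrip(".!?").split()
    return tuple(LEXICON.get(word, "UNKNOWN") for word in words)


def is_grammatical(sentence):
    """Return True if the sentence's tag sequence matches an allowed pattern."""
    return tag(sentence) in ALLOWED_PATTERNS


print(is_grammatical("Dave wrote the paper"))  # True
print(is_grammatical("Dave do jumps"))         # False
```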

3. Semantic analysis

Semantics describes the meaning of words, phrases, sentences, and paragraphs. Semantic analysis attempts to understand the literal meaning of an individual selection of language rather than its syntactic correctness. However, a semantic analysis doesn’t check the language before and after a selection to clarify its meaning.

For instance, “Manhattan calls out to Dave” passes a syntactic analysis because it’s a grammatically correct sentence. However, it fails a semantic analysis. Because Manhattan is a place (and can’t literally call out to people), the sentence’s meaning doesn’t make sense.
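One simple way to picture this kind of check is a rule that says which types of subject a verb can literally take. The sketch below assumes two small hand-written tables (`ENTITY_TYPES` and `VERB_SUBJECT_TYPES`, both hypothetical); it illustrates the idea only and isn't a description of any particular NLP product.

```python
# Hypothetical knowledge tables, limited to the examples in this article.
ENTITY_TYPES = {"dave": "person", "manhattan": "place"}
# Which subject types each verb accepts in a literal reading.
VERB_SUBJECT_TYPES = {"calls": {"person"}, "wrote": {"person"}}


def literal_reading_ok(subject, verb):
    """Return True/False for a literal reading, or None if a word is unknown."""
    subject_type = ENTITY_TYPES.get(subject.lower())
    allowed_types = VERB_SUBJECT_TYPES.get(verb.lower())
    if subject_type is None or allowed_types is None:
        return None  # not enough knowledge to decide
    return subject_type in allowed_types


print(literal_reading_ok("Dave", "wrote"))       # True
print(literal_reading_ok("Manhattan", "calls"))  # False
```

Returning `None` for unknown words keeps the sketch from claiming a sentence is meaningless just because its vocabulary isn't in the tables.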

4. Discourse integration

Discourse describes connected language that spans more than one sentence, such as communication between 2 or more individuals. Discourse integration analyzes prior words and sentences to understand the meaning of ambiguous language.

For instance, if one sentence reads, “Manhattan speaks to all its people,” and the following sentence reads, “It calls out to Dave,” discourse integration checks the first sentence for context to understand that “It” in the latter sentence refers to Manhattan.
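The pronoun example above can be sketched as a "most recent matching entity" rule. This is a deliberately naive heuristic under stated assumptions: it handles only the pronoun “it,” relies on a hypothetical `ENTITY_TYPES` table, and ignores everything a real coreference resolver would weigh (number, syntax, salience).

```python
# Hypothetical entity table, limited to the examples in this article.
ENTITY_TYPES = {"Manhattan": "place", "Dave": "person"}


def resolve_it(sentences):
    """Replace "it" with the most recently mentioned non-person entity."""
    last_thing = None
    resolved = []
    for sentence in sentences:
        tokens = []
        for token in sentence.split():
            core = token.strip(".,!?")
            if core.lower() == "it":
                # Substitute only if an earlier sentence supplied a candidate.
                if last_thing is not None:
                    token = token.replace(core, last_thing)
            elif ENTITY_TYPES.get(core) not in (None, "person"):
                last_thing = core
            tokens.append(token)
        resolved.append(" ".join(tokens))
    return resolved


text = ["Manhattan speaks to all its people.", "It calls out to Dave."]
print(resolve_it(text))
```

With the two sentences above, the second one comes back as “Manhattan calls out to Dave.” because “Manhattan” is the only non-person entity seen before “It.”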

5. Pragmatic analysis

Pragmatics describes the interpretation of language’s intended meaning. Pragmatic analysis attempts to derive the intended, rather than literal, meaning of language.

For instance, a pragmatic analysis can uncover the intended meaning of “Manhattan speaks to all its people.” Methods like neural networks assess the context to understand that the sentence isn’t literal, and most people won’t interpret it as such. A pragmatic analysis deduces that this sentence is a metaphor for how people emotionally connect with places.
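The article attributes this step to methods like neural networks. As a much simpler stand-in, the sketch below uses a hand-written rule: when a place is the subject of a speech verb, the literal reading is implausible, so the sentence is labeled figurative. The tables and the `interpret` function are hypothetical, and a rule like this captures only a sliver of what pragmatic analysis involves.

```python
# Hypothetical tables, limited to the examples in this article.
ENTITY_TYPES = {"manhattan": "place", "dave": "person"}
SPEECH_VERBS = {"speaks", "calls"}


def interpret(subject, verb):
    """Label a subject-verb pair as 'literal', 'figurative', or 'unknown'."""
    subject_type = ENTITY_TYPES.get(subject.lower())
    if subject_type is None:
        return "unknown"
    # A place can't literally speak, so assume the writer meant it figuratively.
    if subject_type == "place" and verb.lower() in SPEECH_VERBS:
        return "figurative"
    return "literal"


print(interpret("Manhattan", "speaks"))  # figurative
print(interpret("Dave", "speaks"))       # literal
```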

Frequently asked questions about NLP

Q: What are the five main steps in NLP?

A: The five steps are lexical analysis, syntactic analysis, semantic analysis, discourse integration, and pragmatic analysis. Together, they move from breaking down words to understanding context and intent.

Q: How does NLP differ from natural language understanding (NLU)?

A: NLP is the broader field that covers analyzing and processing human language. NLU is a subset that focuses specifically on understanding meaning and intent within that language.

Q: What’s an NLP diagram used for?

A: An NLP diagram visually represents how data flows through each stage of processing. It’s a handy way to explain complex language algorithms to both technical and non-technical audiences.

Q: Why is pragmatic analysis important in NLP?

A: Pragmatic analysis looks at intended meaning, not just literal meaning. This is key for detecting metaphors, sarcasm, or implied intent—essential for building chatbots or voice assistants that feel more human.

Q: Can businesses use NLP without advanced AI teams?

A: Yes. Many APIs, including Twilio’s Programmable Voice, come with NLP features built in, so developers can add sentiment detection, speech recognition, or text-to-speech without creating custom models from scratch.

Discover Twilio’s Programmable Voice API

With insights into how the 5 steps of NLP can intelligently categorize and understand verbal or written language, you can deploy text-to-speech technology across your voice services to customize and improve your customer interactions. But first, you need the capability to make high-quality, private connections through global carriers while securing customer and company data.

Twilio’s Programmable Voice API follows natural language processing steps to build compelling, scalable voice experiences for your customers. Try it for free to customize your speech-to-text solutions with add-on NLP-driven features, like interactive voice response and speech recognition, that streamline everyday tasks.
