Asynchronous HTTP Requests in Python with HTTPX and asyncio

Asynchronous code has increasingly become a mainstay of Python development. With asyncio becoming part of the standard library and many third party packages providing features compatible with it, this paradigm is not going away anytime soon.

Let's walk through how to use the HTTPX library to take advantage of this for making asynchronous HTTP requests, which is one of the most common use cases for non-blocking code.

What is non-blocking code?

You may hear terms like "asynchronous", "non-blocking" or "concurrent" and be a little confused as to what they all mean. According to this much more detailed tutorial, two of the primary properties are:

- Asynchronous routines are able to “pause” while waiting on their ultimate result to let other routines run in the meantime.

- Asynchronous code, through the mechanism above, facilitates concurrent execution. To put it differently, asynchronous code gives the look and feel of concurrency.

So asynchronous code is code that can pause while waiting for a result, in order to let other code run in the meantime. It doesn't "block" other code from running, so we can call it "non-blocking" code.
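To make the idea of pausing concrete, here is a minimal sketch that uses only asyncio. The names worker and demo are made up for illustration, and asyncio.sleep stands in for any slow operation, such as waiting on a server.

```python
import asyncio


async def worker(name: str, delay: float, log: list) -> None:
    log.append(f"{name} started")
    # Awaiting suspends this coroutine and hands control back to the
    # event loop, so the other worker can run in the meantime.
    await asyncio.sleep(delay)
    log.append(f"{name} finished")


async def demo() -> list:
    log: list = []
    # Run both workers concurrently on a single thread.
    await asyncio.gather(worker("slow", 0.2, log), worker("fast", 0.1, log))
    return log


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

The slow worker starts first but finishes last: while it is paused, the fast worker starts and completes.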

The asyncio library provides a variety of tools for Python developers to do this, and libraries such as HTTPX and aiohttp provide more specific functionality for HTTP requests. HTTP requests are a classic example of something that is well-suited to asynchronicity because they involve waiting for a response from a server, during which time it would be convenient and efficient to have other code running.

Setting up

Make sure to have your Python environment set up before we get started. Follow this guide up through the virtualenv section if you need some help. Getting everything working correctly, especially with respect to virtual environments, is important for isolating your dependencies if you have multiple projects running on the same machine. You will need Python 3.7 or higher in order to run the code in this post.

Now that your environment is set up, you’re going to need to install the HTTPX library, which can make both asynchronous and synchronous requests; we will compare the two. After activating your virtual environment, install it by running pip install httpx.

With this you should be ready to move on and write some code.

Making an HTTP Request with HTTPX

Let's start off by making a single GET request using HTTPX, to demonstrate how the keywords async and await work. We're going to use the Pokemon API as an example, so let's start by trying to get the data associated with the legendary 151st Pokemon, Mew.

Run the following Python code, and you should see the name "mew" printed to the terminal:

In this code, we're creating a coroutine called main, which we are running with the asyncio event loop. Here, we are making a request to the Pokemon API and then awaiting a response.

This async keyword basically tells the Python interpreter that the coroutine we're defining should be run asynchronously with an event loop. The await keyword passes control back to the event loop, suspending the execution of the surrounding coroutine and letting the event loop run other things until the result that is being "awaited" is returned.

Making a large number of requests

Making a single asynchronous HTTP request is great because we can let the event loop work on other tasks instead of blocking the entire thread while waiting for a response. This functionality truly shines when trying to make a larger number of requests. Let's demonstrate this by performing the same request as before, but for the first 150 Pokemon.

Let's take the previous request code and put it in a loop, updating which Pokemon's data is being requested and using await for each request:

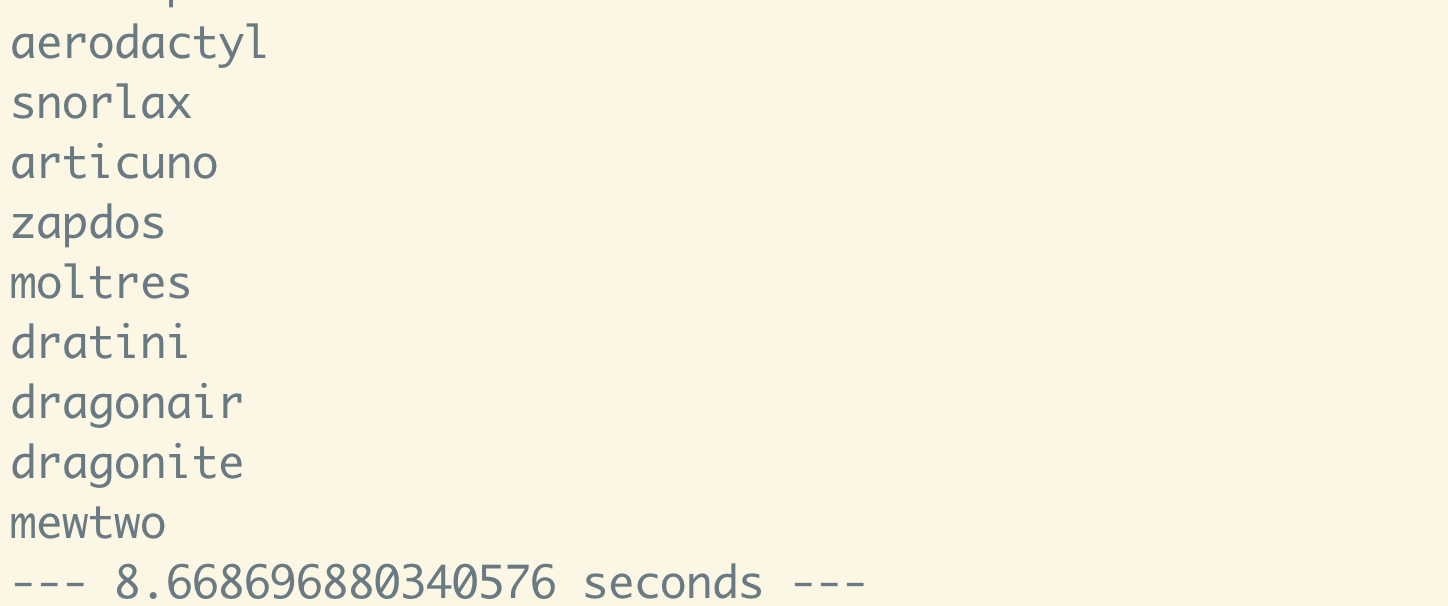

This time, we're also measuring how much time the whole process takes. If you run this code, you should see the name of each Pokemon printed to your terminal, followed by the total time elapsed.

8.6 seconds seems pretty good for 150 requests, but we don't really have anything to compare it to. Let's try accomplishing the same thing synchronously.

Comparing speed with synchronous requests

To print the first 150 Pokemon as before, but without async/await, run the following code:

You should see the same output with a different runtime, although it doesn't seem to be that much slower than before. This is most likely because the connection pooling done by the HTTPX Client is doing most of the heavy lifting. However, we can utilize more asyncio functionality to get better performance than this.

Utilizing asyncio for improved performance

There are more tools that asyncio provides which can greatly improve our performance overall. In the original example, we are using await after each individual HTTP request, which isn't quite ideal. We can instead run all of these requests "concurrently" as asyncio tasks and then check the results at the end using asyncio.ensure_future and asyncio.gather.

If the code that actually makes the request is broken out into its own coroutine function, we can create a list of tasks, consisting of futures for each request. We can then unpack this list into a gather call, which runs them all together. When we await this call to asyncio.gather, we get back a list containing the result of each future, in the same order as the futures were passed in. This way we're only using await one time.

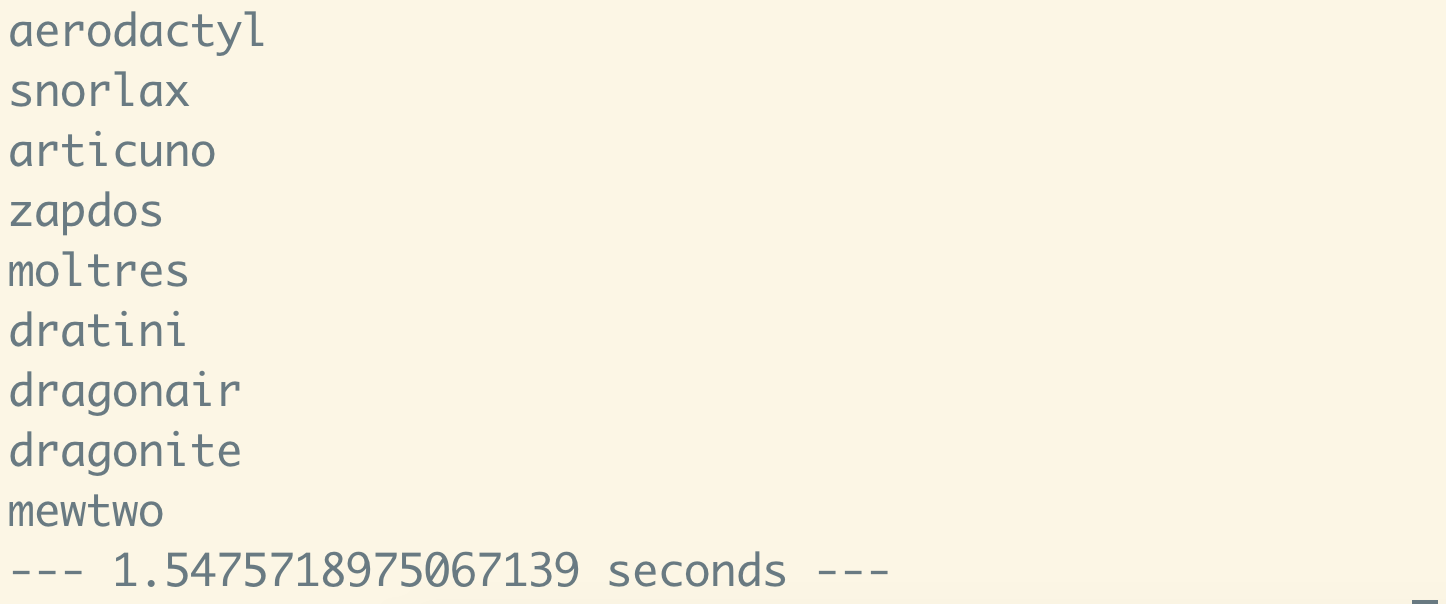

To see what happens when we implement this, run the following code:

This brings our time down to a mere 1.54 seconds for 150 HTTP requests! That is a vast improvement over the previous examples. This is completely non-blocking, so the total time to run all 150 requests is going to be roughly equal to the amount of time that the longest request took to run. The exact numbers will vary depending on your internet connection.
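The claim about the longest request can be illustrated without touching the network. The following sketch replaces real requests with asyncio.sleep calls of made-up durations, so it ignores real-world factors such as connection pool limits and server behavior; fake_request and the latency values are purely illustrative.

```python
import asyncio
import time


async def fake_request(latency: float) -> float:
    # Stand-in for an HTTP request that takes `latency` seconds.
    await asyncio.sleep(latency)
    return latency


async def run_sequentially(latencies) -> float:
    start = time.perf_counter()
    for latency in latencies:
        await fake_request(latency)
    return time.perf_counter() - start


async def run_concurrently(latencies) -> float:
    start = time.perf_counter()
    await asyncio.gather(*(fake_request(latency) for latency in latencies))
    return time.perf_counter() - start


async def compare(latencies) -> tuple:
    sequential = await run_sequentially(latencies)
    concurrent = await run_concurrently(latencies)
    return sequential, concurrent


if __name__ == "__main__":
    seq, conc = asyncio.run(compare([0.05, 0.10, 0.15, 0.20]))
    print(f"sequential: {seq:.2f}s, concurrent: {conc:.2f}s")
```

The sequential time is close to the sum of the latencies, while the concurrent time is close to the largest single latency.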

Concluding Thoughts

As you can see, using libraries like HTTPX to rethink the way you make HTTP requests can add a huge performance boost to your code and save a lot of time when making a large number of requests.

In this tutorial, we have only scratched the surface of what you can do with asyncio, but I hope that this has made starting your journey into the world of asynchronous Python a little easier. If you're interested in another similar library for making asynchronous HTTP requests, check out this other blog post I wrote about aiohttp.

I’m looking forward to seeing what you build. Feel free to reach out and share your experiences or ask any questions.

- Email: sagnew@twilio.com

- Twitter: @Sagnewshreds

- Github: Sagnew

- Twitch (streaming live code): Sagnewshreds
