Why Your Company Should Care About Retrieval-Augmented Generation


When working with large language models (LLMs), many companies are using an approach called retrieval-augmented generation (RAG) to help improve accuracy and reduce the risk of AI hallucinations. The approach is gaining traction. But what is RAG, and why should you care?

LLMs rely on pre-trained data, meaning they’re unaware of anything that happened after their training cutoff. That training data typically comes from publicly available sources, which means an LLM likely has no knowledge of your company’s proprietary or sensitive data. These limitations leave LLMs useful for general reasoning and information, but hamstring them in many business contexts.

Enter RAG. With RAG, a system first retrieves and pre-processes content that’s relevant to a user’s prompt, and then passes this contextual data to the LLM along with the prompt. Because retrieval happens at request time, the LLM can draw on the data you’ve collated, as it stands right now, to supplement its response. That creates remarkable possibilities for companies looking to innovate with LLM-based applications.
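To make that flow concrete, here is a minimal Python sketch of the retrieve-then-prompt pattern. Everything in it is an illustrative assumption: the `DOCUMENTS` list, the word-overlap scoring in `retrieve`, and the `build_prompt` template are hypothetical stand-ins. A production system would more likely use embeddings and a vector store for retrieval, and would send the resulting prompt to an actual LLM API, which is left out here.

```python
import re

# Hypothetical in-memory knowledge base standing in for your company's data.
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Our support line is open Monday through Friday, 9am to 5pm.",
    "Premium plans include priority routing for SMS campaigns.",
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list, top_k: int = 2) -> list:
    """Rank documents by how many words they share with the query.

    Word overlap is a toy relevance score used only for illustration.
    """
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    # Keep only documents with at least one shared word, best match first.
    relevant = sorted(
        (item for item in scored if item[0] > 0),
        key=lambda item: item[0],
        reverse=True,
    )
    return [doc for _, doc in relevant[:top_k]]


def build_prompt(query: str, context: list) -> str:
    """Combine retrieved context and the user's question into one prompt."""
    context_block = "\n".join(f"- {doc}" for doc in context)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {query}"
    )


question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, DOCUMENTS))
print(prompt)  # This string is what would be sent to the LLM.
```

The point of the sketch is the ordering: retrieval runs first, and the LLM only ever sees the handful of passages that matched, not the whole knowledge base.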

(For more details, check out our glossary page: What is Retrieval-Augmented Generation (RAG)?)

In this post, we’ll explore the real-world applications of RAG for businesses that want to level up their customer experience.

Why your company should care about RAG

Companies have high hopes for how AI will improve their customer interactions and operational efficiency. But their expectations are tempered when they realize that an LLM right out of the box just won’t cut it.

However, pairing today’s powerful LLMs with your customer data is a different story. By connecting your proprietary data to an LLM with RAG or another data-augmentation technique, you can build world-class personalized customer experiences at scale.

Our 2024 State of Customer Engagement Report found that many companies are already turning to AI.

Proprietary data is now a possibility

Trained on publicly accessible data, an LLM would have no knowledge of your company’s Q4 revenue numbers in South America, or whether your SMS marketing campaign sees more conversions on Tuesdays or Saturdays. It also doesn’t know your customers’ wants, needs, and preferences – the data you’ve gathered while building a relationship with your customers or users.

However, with RAG, your company can integrate real-time, proprietary data into AI responses, enhancing accuracy and personalization. This approach does much more than ensure that AI interactions are relevant; it tailors an AI system to your company’s unique data assets. You still control your company’s data—it’s proprietary and not part of an LLM’s training—but with RAG, you can use that data strategically to push LLM integrations even further.

What about other data-augmentation techniques?

RAG is not the only data-augmentation technique out there. Let’s explore a few alternatives, and talk briefly about their pros and cons.

- Training your own LLM: Large enterprises with significant resources may opt to create and train a language model completely from scratch, incorporating their own proprietary data into the training data set. This offers the advantage of complete customization and control over the LLM. However, it’s extremely resource-intensive, requiring significant time, computational power, and expertise – and introducing new data generally means training a new model.

- Fine-tuning an existing LLM: Another option some companies use for data-augmentation is to start with a pre-trained language model and fine-tune it with their proprietary data. This approach leverages an existing LLM, tailoring it to meet your specific needs. This saves a considerable amount of time and resources compared to training your own LLM. However, even fine-tuning an LLM can require substantial computational resources and expertise – and you need to carefully screen the data you use when fine-tuning.

- Basic prompt engineering: This is a more traditional technique, and it involves crafting prompts to include all relevant information within a single context window. This is a straightforward process and doesn’t require extensive computational resources. However, it can be limiting, as the context window for a prompt has a fixed size. Additionally, providing large prompts with every call to an LLM can become expensive. (Prompt engineering is a great first step towards enhancing LLM responses, though!)

In contrast with these alternative techniques, RAG dynamically retrieves and integrates external data. It provides contextual data that yields more comprehensive and accurate responses—without overloading the AI system.
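One way to picture the difference from putting everything into a single prompt is a context budget. The sketch below is an illustration under stated assumptions: word count stands in for token count (real tokenizers behave differently), the sample documents are made up, and `CONTEXT_BUDGET`, `estimate_tokens`, and `pack_context` are hypothetical names rather than part of any library.

```python
CONTEXT_BUDGET = 12  # Hypothetical budget, in "tokens", reserved for context.


def estimate_tokens(text: str) -> int:
    """Crude token estimate: one token per whitespace-separated word."""
    return len(text.split())


def pack_context(ranked_docs: list, budget: int) -> list:
    """Add documents in relevance order until the budget is used up."""
    packed, used = [], 0
    for doc in ranked_docs:
        cost = estimate_tokens(doc)
        if used + cost > budget:
            break  # The next most relevant document no longer fits.
        packed.append(doc)
        used += cost
    return packed


# Made-up sample data, assumed to be already sorted from most to least
# relevant by a retriever.
ranked = [
    "Tuesday SMS campaigns convert best.",
    "Saturday campaigns see lower open rates.",
    "Q4 revenue figures are in the finance wiki.",
]

everything = sum(estimate_tokens(doc) for doc in ranked)
selected = pack_context(ranked, CONTEXT_BUDGET)
print(f"All documents: {everything} tokens; packed: {len(selected)} documents")
```

Sending every document on every call would cost the full total each time, while the packed selection stays within a fixed budget regardless of how large the underlying data set grows.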

However, RAG isn’t perfect – you won’t always have proprietary data relevant to the current query, and it doesn’t eliminate LLM hallucination. Providing context can also still sometimes confuse current leading-edge LLMs. But considering all of the pros and cons, we at Twilio really like the RAG approach. Marrying your company’s first-party data with the power of LLMs using RAG is accessible (and getting easier), and promises to greatly improve whatever AI-enhanced features and products you’re building.

Benefits for specific industries

If you’re still not convinced, let’s get more specific. RAG can offer significant benefits across various sectors. Here are some theoretical ways RAG can improve the experience across ecommerce, healthcare, and customer service:

In ecommerce, RAG can enhance the shopping experience by providing personalized recommendations and more detailed responses to product inquiries. This can lead to higher customer satisfaction and increased sales.

Skeptical? See how Trade Me, New Zealand’s largest online auction website, improved their open and click-through rates with better recommendations and audience predictions.

In healthcare, RAG can support patient interactions by accessing up-to-date medical data and patient history, improving patient outcomes and the accuracy of advice.

Twilio.org, our social impact arm, has thought through many of the implications of AI in medicine. See our webinar on the future of healthcare personalization for a survey of current trends and some healthcare predictions.

In customer service, RAG can handle complex queries with contextual accuracy. This would reduce the burden on human agents and improve response times, ultimately increasing customer satisfaction.

See a real-world example of AI conversational assistants easing the burden on human agents from our friends at PolyAI. They integrated their assistants with Twilio Flex, our cloud contact center, and our Voice API.

Real-world use cases of RAG

Because RAG combines real-time data retrieval with the power of generative AI, it opens up enormous potential for practical applications across industries, including applications that we’re now using here at Twilio.

By enhancing the contextual relevance and accuracy of AI responses, RAG can transform the way businesses leverage GenAI to service their customers. Let’s consider some real-world use cases where RAG brings substantial impact, and imagine other places it can make a difference.

Customer support

In the area of customer support (where we already discussed some of the tremendous benefits), RAG enhances efficiency by providing meaningful context to an LLM as it generates responses.

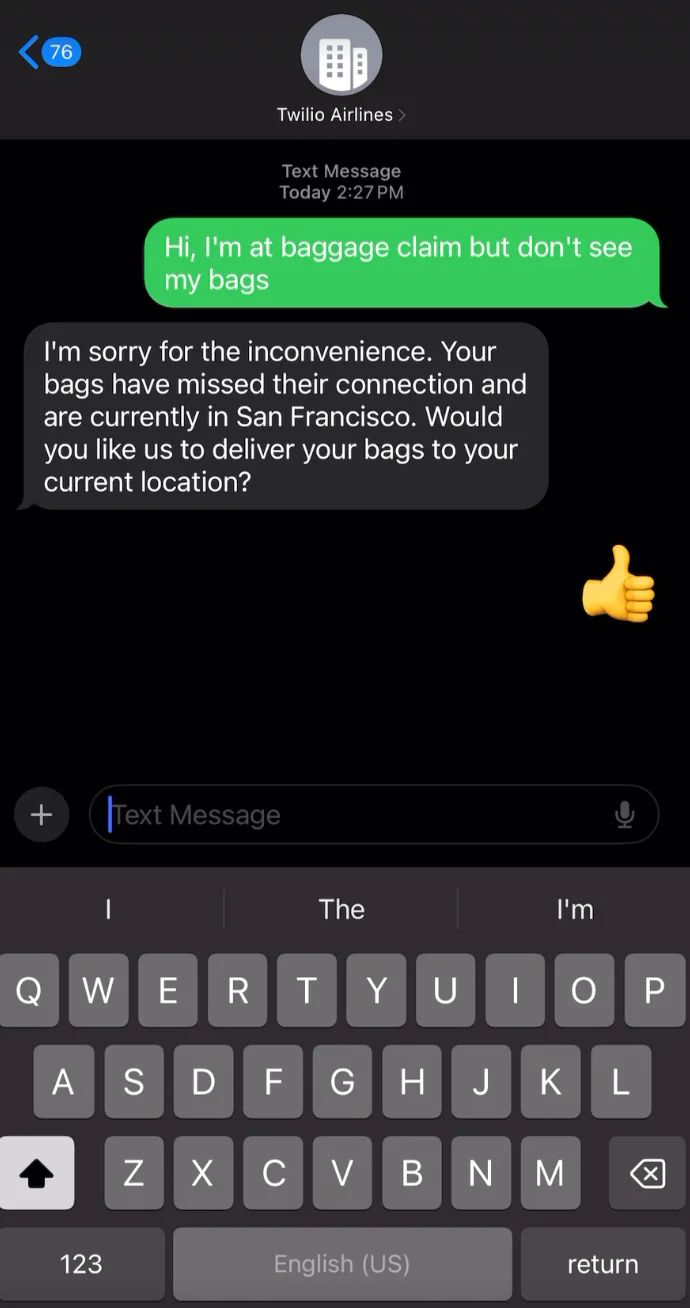

RAG can significantly enhance the self-service customer flow by guiding users to the most relevant information. Imagine a user trying to navigate through a company’s product offerings to find specific information. With RAG, an automated assistant can dynamically retrieve and present the most pertinent articles and solutions based on the user’s queries. It can also retrieve debugging information, support articles, and blog posts to better guide your users on their journey.

A user asks for guidance from an example AI Assistant helping with travel issues

This approach would not only provide users with faster and more accurate assistance, but it would also reduce the workload on human agents.
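As a rough illustration of how an assistant might pick the most pertinent article and still know when to involve a human agent, consider the following sketch. It is not how any Twilio product works. The `ARTICLES` data, the `MIN_SCORE` cutoff, and the `find_article` helper are all hypothetical, and bag-of-words cosine similarity stands in for the embedding-based search a real system would more likely use.

```python
import math
import re
from collections import Counter
from typing import Optional

# Hypothetical help-center articles: title -> body text.
ARTICLES = {
    "Rebooking a cancelled flight": "rebook cancelled flight new itinerary change fee",
    "Lost baggage claims": "lost baggage claim form airport delivery",
    "Updating passenger details": "update passenger name passport details booking",
}
MIN_SCORE = 0.2  # Assumed cutoff; below it, route the user to a human agent.


def vectorize(text: str) -> Counter:
    """Turn text into a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def find_article(query: str) -> Optional[str]:
    """Return the best-matching article title, or None to signal a handoff."""
    query_vec = vectorize(query)
    best_title, best_score = None, 0.0
    for title, body in ARTICLES.items():
        score = cosine(query_vec, vectorize(f"{title} {body}"))
        if score > best_score:
            best_title, best_score = title, score
    return best_title if best_score >= MIN_SCORE else None


print(find_article("My flight was cancelled, how do I rebook?"))
print(find_article("What is the weather in Paris?"))
```

Returning `None` when nothing scores above the cutoff is a deliberate choice in this sketch: a query with no good match gets escalated instead of being answered with loosely related content.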

For more information on a customer use case and a real-world ready-to-try example, read about Twilio’s Help Center Assistant, a GenAI chatbot that can deliver precise and contextual support to users. (And, please try it out!)

Pre-sales assistance

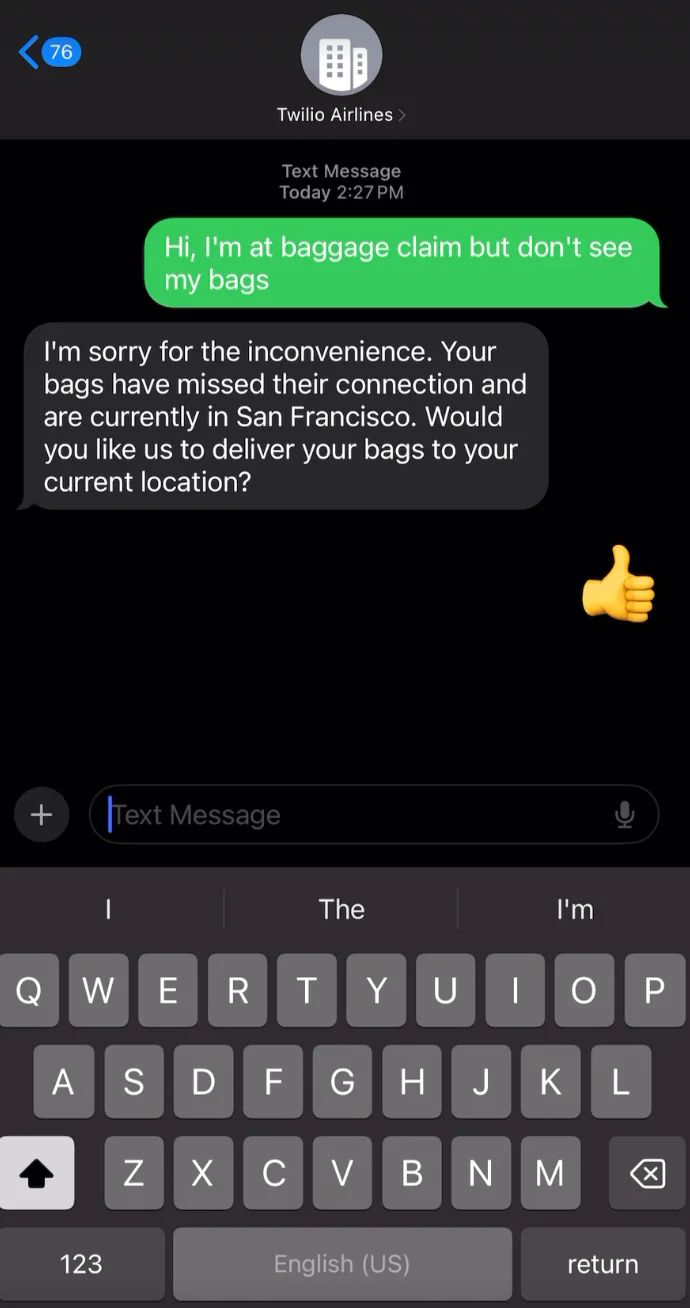

What about in the area of customer lead qualification? Continuing our previous example, RAG can provide highly personalized responses to a prospective customer’s inquiries, streamlining essential pre-sales processes.

The automated assistant provides a personalized response and helps with lead qualification.

The use of GenAI, particularly in contexts like Requests for Proposals (RFPs) and sales interactions, can make sales work more efficient and effective. As companies lean on RAG-enhanced GenAI to deliver tailored and precise information based on the specific needs and questions of potential clients, businesses and customers both win.

We’ve highlighted customer support and pre-sales assistance as two possible use cases, but the possibilities are essentially endless:

- Marketing automation: RAG can dynamically generate personalized marketing content, pulling in contextual information tied to an individual customer’s preferences and behaviors.

- Knowledge management: RAG can enhance internal knowledge bases, providing employees with accurate and contextually relevant information from a vast array of data sources.

- Human resources: RAG can automate the initial stages of the recruitment process by screening resumes, generating personalized interview questions, and providing candidates with relevant information about the company and role.

- Healthcare: RAG can assist healthcare providers during virtual consultations by retrieving patient history and relevant medical literature to provide accurate and informed advice.

These use cases highlight RAG’s versatility, demonstrating its potential to transform customer interactions and business processes across industries.

Introducing Twilio AI Assistants

RAG enhances LLM applications with real-time data retrieval, delivering accurate and contextually relevant GenAI responses. Modern enterprises across industries—such as ecommerce, healthcare, and customer service—look to RAG to boost the effectiveness of their AI applications. It brings significant business benefits, including improved customer interactions, higher operational efficiency, and better decision-making.

Twilio AI Assistants leverages RAG to provide customer-aware interactions, enhancing support automation. These assistants dynamically fetch relevant data as the customer interaction takes place, offering a seamless and enriched customer experience.

To see Twilio AI Assistants in action, check out this video. Follow Twilio Alpha for updates, and sign up for the waitlist to begin using Twilio AI Assistants soon!
