Using Twilio’s Real-Time Transcriptions Service in Node.js


Twilio’s Real-Time Transcription service is now generally available, bringing streaming speech recognition capabilities straight into your Twilio Voice applications.

In the past, developers often relied on third-party services coupled with WebSockets, or on the TwiML <Gather> or <Record> verbs, which meant waiting until the caller finished speaking to see results. Now, transcriptions arrive in real time during the call, which lets you act on what the caller says the moment they say it.

You can customize the service, for example by choosing Deepgram or Google as the speech-to-text provider, while remaining HIPAA-eligible and PCI-compliant for sensitive use cases. You can use it to power live captions, trigger automated workflows mid-conversation, feed conversational AI, or run live sentiment analysis with Twilio’s Conversational Intelligence, all without building your own transcription pipeline.

In this tutorial, you’ll learn how to stream transcripts via webhooks using Twilio’s Real-Time Transcriptions service in a Node.js application.

Prerequisites

- A Twilio account

- A Twilio phone number

- The ngrok CLI

- Node.js installed on your machine

Set up a Node.js application

You’ll start setting up your application by initializing a Node.js project and installing the dependencies you’ll need.

Initialize a Node.js project

Open your terminal, navigate to your preferred directory for Node.js projects, and run mkdir voice-transcription to create a project folder, cd voice-transcription to change into it, and npm init -y to initialize a Node.js project.

Install the required dependencies

Next, run npm install express twilio to install the dependencies you’ll need for this project:

- express: A web framework for Node.js that makes it easier to handle incoming HTTP requests and simplifies routing and server logic.

- twilio: The Twilio Node.js helper library. In this tutorial, it's used to construct the TwiML that tells Twilio what to do during the call (in this case, start Twilio's real-time transcription service). It can also be used to access the Twilio Programmable Voice API, for example to place phone calls.

<Transcription> TwiML Noun

Before building the application, let's briefly look at the <Transcription> TwiML noun. The <Transcription> noun is used inside a <Start> verb to begin Twilio’s real-time transcription during an active call. When Twilio executes it, Twilio forks the call’s audio to a speech-to-text engine and sends transcription results to your application as they are produced. This lets you process speech while the caller is still talking rather than waiting until they have finished.

The statusCallbackUrl attribute specifies where Twilio should send transcription events such as transcription-content or transcription-stopped.

Use the transcriptionEngine attribute to choose Google or Deepgram as the provider. This is useful when you want to take advantage of engine-specific features or optimizations, which you can select with the speechModel attribute.

The speechModel attribute specifies which model to use. The telephony model (the default) is optimized for phone call audio, while the long model is optimized for long-form audio such as lectures and extended conversations.

Additionally, you can use Twilio’s Conversational Intelligence by setting the intelligenceService attribute to store and analyze real-time transcriptions. When enabled, Conversational Intelligence persists the live transcripts and runs post-call language operators.

Other attributes include:

- name: A label you can use to reference or stop the transcription.

- languageCode: The language the transcription should be performed in (defaults to "en-US" for American English).

- track: Which audio track to transcribe: the caller's audio, the callee's audio, or both.
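To see how these attributes fit together, the following dependency-free sketch renders the TwiML that starts a transcription. In the application itself, the twilio helper library generates this markup for you (check that your installed version supports <Transcription>). The function names buildTranscriptionTwiml and escapeXmlAttr, the example callback URL, and the attribute values such as inbound_track and telephony are illustrative assumptions, so confirm the accepted values for your engine in the <Transcription> reference.

```javascript
// Hypothetical helper: renders a TwiML document that starts real-time
// transcription. Attribute names follow the <Transcription> noun described
// above; the values passed in below are examples, not Twilio defaults.

// Escape characters that are not allowed inside XML attribute values.
function escapeXmlAttr(value) {
  return String(value)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

function buildTranscriptionTwiml(attributes) {
  // Leave out attributes that were not provided so Twilio applies its defaults.
  const attrs = Object.entries(attributes)
    .filter(([, value]) => value !== undefined && value !== null)
    .map(([name, value]) => `${name}="${escapeXmlAttr(value)}"`)
    .join(' ');

  return (
    '<?xml version="1.0" encoding="UTF-8"?>' +
    `<Response><Start><Transcription ${attrs}/></Start></Response>`
  );
}

// Example values only (assumptions for illustration).
const twiml = buildTranscriptionTwiml({
  name: 'live-captions',
  statusCallbackUrl: 'https://abc123.ngrok.io/transcription',
  transcriptionEngine: 'google',
  speechModel: 'telephony',
  languageCode: 'en-US',
  track: 'inbound_track',
});

console.log(twiml);
```

Building the string by hand is only meant to make the attribute-to-markup mapping visible; in index.js, letting the helper library produce the TwiML avoids escaping mistakes.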

Integrate the Real-Time Transcription Service

Open the voice-transcription project directory in your preferred IDE or editor and create a file called index.js within the directory. This file is where you’ll be writing the code that will receive calls.

Then, open the index.js file and add the following code to it:

The code above sets up an Express server that responds to HTTP POST requests on the /voice route. The route constructs TwiML using Twilio's Node.js helper library, starting with a greeting to the caller. It then starts the transcription service, with the statusCallbackUrl attribute pointing to the /transcription route (covered later in this tutorial).
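Twilio has to reach the statusCallbackUrl over the public internet, so when testing through ngrok it can help to build an absolute URL from the incoming request instead of hardcoding the tunnel address. Here is a minimal sketch, assuming the request arrives through ngrok with the public hostname in the Host header and the original scheme in X-Forwarded-Proto; the function name transcriptionCallbackUrl is hypothetical.

```javascript
// Hypothetical helper: builds an absolute /transcription URL from the
// webhook request that hit /voice. Assumes a reverse proxy such as ngrok
// passes the public hostname in Host and the scheme in X-Forwarded-Proto.
function transcriptionCallbackUrl(req, path = '/transcription') {
  const headers = req.headers || {};
  // X-Forwarded-Proto may hold a comma-separated list; use the first entry.
  const proto = (headers['x-forwarded-proto'] || 'https').split(',')[0].trim();
  const host = headers.host;
  if (!host) {
    throw new Error('Request has no Host header; cannot build callback URL');
  }
  return `${proto}://${host}${path}`;
}

// In the /voice handler, pass the result as the statusCallbackUrl attribute
// when you build the <Transcription> TwiML.
const exampleReq = {
  headers: { host: 'abc123.ngrok.io', 'x-forwarded-proto': 'https' },
};
console.log(transcriptionCallbackUrl(exampleReq));
// prints: https://abc123.ngrok.io/transcription
```

Deriving the URL this way means you don't have to edit index.js each time ngrok assigns a new forwarding address, though in production a fixed, configured base URL is usually simpler to reason about.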

The twiml.pause({ length: 3600 }) instruction is used in this example solely to keep the call active while demonstrating the transcription service. In a production setting, you would replace this pause with actual call logic such as <Gather> to collect user input, <Record> to capture audio, or interactive voice response (IVR) menus.

Finally, the TwiML response is formatted as XML and sent back to Twilio. The response for the above route will look like the following:

Next, you'll need to capture the transcription events that will be sent to the /transcription route specified in the statusCallbackUrl. At the end of the file, add the following route to handle the transcription callbacks:

This route receives POST requests from Twilio containing transcription data. The code first checks whether the event is a transcription-content event, since only the actual transcription content matters here. When Twilio sends transcription content, the code logs the TranscriptionData to the console and responds with an HTTP 200 OK status. The incoming body of the request will look something like the following:

As you can see, the transcription webhook request contains various data, including the event type, the call and account SIDs, the audio track the speech came from, and the transcription data, which includes the transcript and its confidence score.
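Because Twilio posts these webhooks as form-encoded data, TranscriptionData arrives as a JSON string that has to be parsed before you can read the transcript and confidence. The sketch below shows one way to structure the /transcription handler. The field names TranscriptionEvent, CallSid, and Track are assumptions based on the payload described above, so compare them against a real request body. The Express wiring is left out so the logic runs on its own; in index.js you would register it with app.post('/transcription', transcriptionHandler) after enabling form parsing with app.use(express.urlencoded({ extended: false })).

```javascript
// Sketch of a /transcription webhook handler. Field names such as
// TranscriptionEvent, CallSid, and Track are assumptions; check them
// against the body Twilio actually sends.

// Returns a compact summary for transcription-content events, or null for
// any other event type (for example transcription-stopped).
function parseTranscriptionEvent(body) {
  if (!body || body.TranscriptionEvent !== 'transcription-content') {
    return null;
  }

  let data;
  try {
    // TranscriptionData is a JSON-encoded string inside the form body.
    data = JSON.parse(body.TranscriptionData);
  } catch (err) {
    console.error('Could not parse TranscriptionData:', err.message);
    return null;
  }
  if (!data || typeof data !== 'object') {
    return null;
  }

  return {
    callSid: body.CallSid,
    track: body.Track,
    transcript: data.transcript,
    confidence: data.confidence,
  };
}

// Express-style handler: log transcription content and acknowledge the
// webhook with 200 OK, as described above.
function transcriptionHandler(req, res) {
  const event = parseTranscriptionEvent(req.body);
  if (event) {
    console.log(`[${event.track}] ${event.transcript} (confidence: ${event.confidence})`);
  }
  res.sendStatus(200);
}
```

Keeping the parsing in a separate function makes it easy to test without a live call and to reuse when you later store or forward transcripts.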

In a production application, you would process this data according to your use case, such as storing it in a database, triggering automated responses, or feeding it to a conversational AI system. For this example, you’ll just log the TranscriptionData to demonstrate how the real-time transcription service works.
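As a stand-in for a database, the sketch below keeps a running transcript per call in memory, keyed by call SID, and skips low-confidence fragments. The class name TranscriptStore, the 0.5 threshold, and the in-memory Map are illustrative assumptions; anything held this way disappears when the process restarts, so a real deployment would persist transcripts somewhere durable.

```javascript
// Hypothetical in-memory store that accumulates transcript fragments per call.
class TranscriptStore {
  constructor(minConfidence = 0.5) {
    this.minConfidence = minConfidence; // fragments below this are skipped
    this.calls = new Map(); // callSid -> array of transcript fragments
  }

  // Returns true if the fragment was stored.
  add(callSid, transcript, confidence) {
    if (!callSid || !transcript) return false;
    if (typeof confidence === 'number' && confidence < this.minConfidence) {
      return false;
    }
    if (!this.calls.has(callSid)) this.calls.set(callSid, []);
    this.calls.get(callSid).push(transcript.trim());
    return true;
  }

  // Joins everything captured so far for a call into one string.
  fullTranscript(callSid) {
    return (this.calls.get(callSid) || []).join(' ');
  }

  // Call this when the call ends (for example on transcription-stopped)
  // to free memory once the transcript has been saved elsewhere.
  clear(callSid) {
    this.calls.delete(callSid);
  }
}

const store = new TranscriptStore();
store.add('CA123', 'Hi, I need help', 0.91);
store.add('CA123', 'with my order.', 0.88);
store.add('CA123', 'mumble', 0.2); // below threshold, ignored
console.log(store.fullTranscript('CA123'));
// prints: Hi, I need help with my order.
```

Inside the /transcription route, you could call store.add with the call SID, transcript, and confidence from each transcription-content event.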

Finally, add the last bit of code to the bottom of index.js to start your Express server so that it can listen for incoming webhook requests:

Connect your application to Twilio

Before you run and test the transcription service, you'll first need to expose your local Express server to the internet using ngrok. Then, you’ll need to connect your ngrok tunnel to your Twilio number, so that incoming calls to your Twilio number are forwarded to your Node application.

Create an ngrok tunnel

Your Express application will run a local server on your computer, which means it isn’t accessible from the public internet by default. To allow Twilio to send webhook requests to your application, you’ll use ngrok to create a secure tunnel. ngrok will generate a public URL that forwards incoming requests directly to your local Express server.

Open a new terminal tab and run ngrok http 3000 to start a tunnel to port 3000 (the port your Express server listens on).

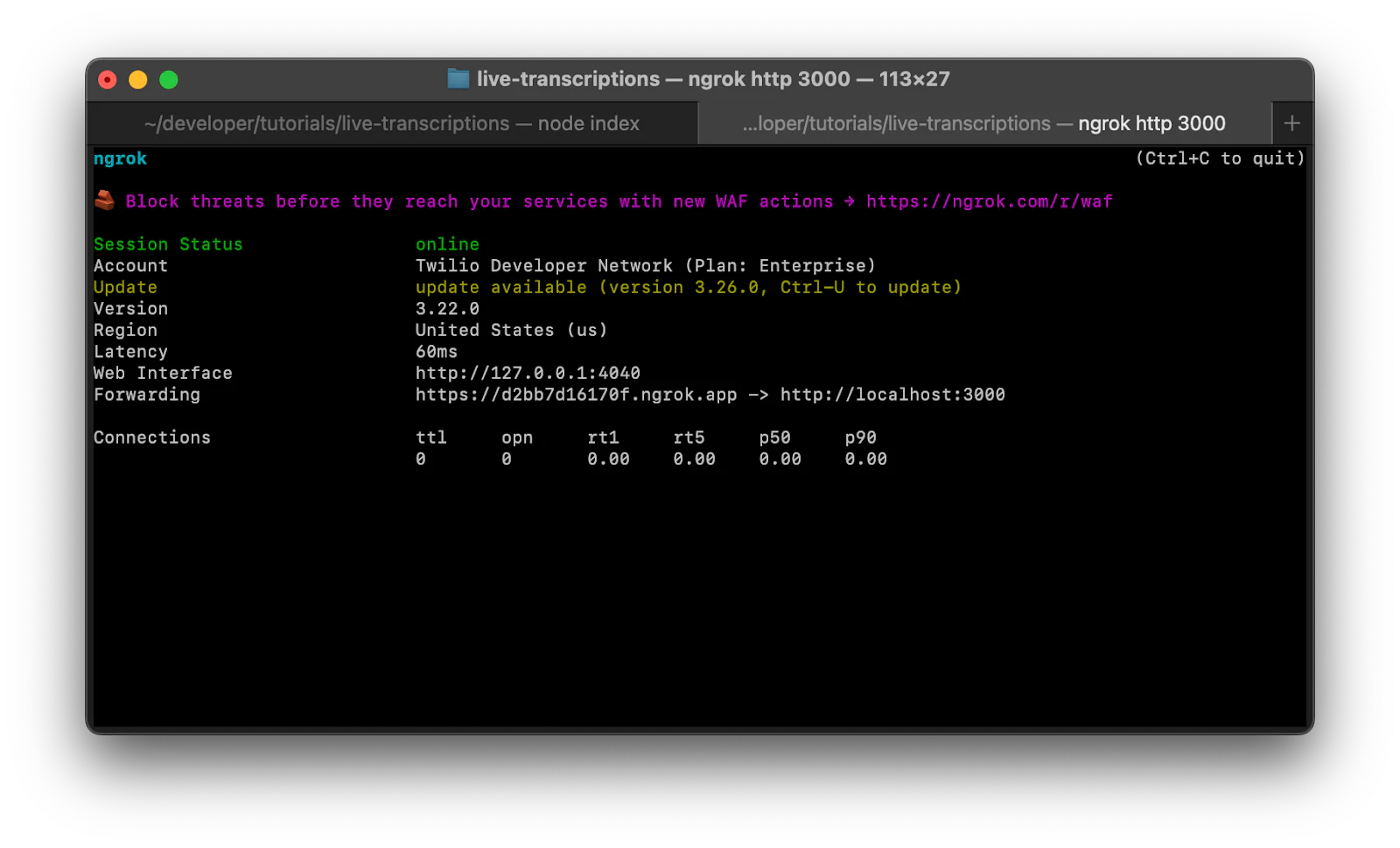

After running the command, your terminal will look similar to the screenshot above.

The Forwarding URL is the address you’ll connect your Twilio number to. Copy this URL; you’ll need it in the next section.

Add the forwarding URL to your Twilio number

You'll now connect your application to your Twilio phone number using the forwarding URL provided by ngrok. This URL will route all incoming calls to your Node.js application for processing.

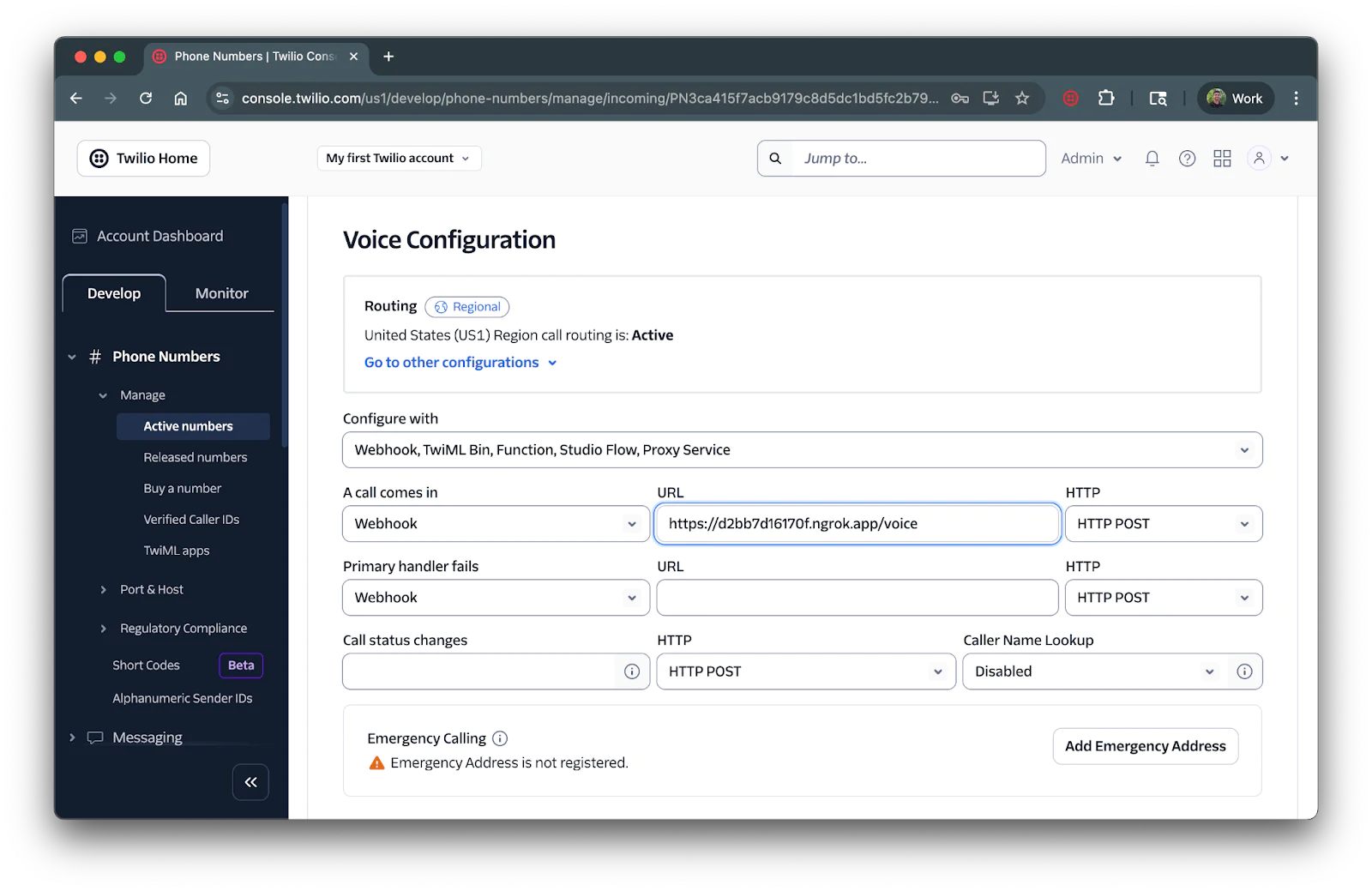

Navigate to the Active Numbers section of your Twilio Console. You can access this by clicking Phone Numbers > Manage > Active numbers from the left navigation panel in your Console.

Click on the Twilio phone number you want to use for your transcription service. In the Voice Configuration section, select Webhook for the "A call comes in" dropdown, then enter your ngrok forwarding URL followed by "/voice" in the textbox (for example: https://abc123.ngrok.io/voice). Finally, ensure the HTTP dropdown is set to HTTP POST.

Ensure you save the configuration by scrolling down and clicking on the Save configuration button.

Test the application

Return to the terminal where ngrok is running and keep that tunnel active. Then, head over to the first terminal tab where you set up your Node.js application (or open another terminal tab and navigate to your project directory), and start your Express server by running node index.js.

Your real-time transcription service is now live!

You can test it by calling your Twilio phone number. After the greeting plays, start speaking, and the transcription data will appear in your terminal in real time. Each transcript is logged with its confidence score, so you can see how accurately the transcription engine processes your voice.
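If you want to exercise the /transcription route without placing a call, you can post a hand-built event to your local server. This sketch assumes Node.js 18 or later (for the built-in fetch), the Express server from this tutorial listening on port 3000, and the payload field names used earlier, which are assumptions rather than a copy of Twilio's exact payload. The function names buildFakeTranscriptionBody and sendFakeTranscription are hypothetical.

```javascript
// Hypothetical local smoke test: sends a fake transcription-content event
// to the /transcription route. Requires Node.js 18+ for the global fetch.

function buildFakeTranscriptionBody({ callSid, transcript, confidence }) {
  // Twilio webhooks are form-encoded, with TranscriptionData as a JSON string.
  return new URLSearchParams({
    TranscriptionEvent: 'transcription-content',
    CallSid: callSid,
    Track: 'inbound_track',
    TranscriptionData: JSON.stringify({ transcript, confidence }),
  }).toString();
}

async function sendFakeTranscription(url, event) {
  const response = await fetch(url, {
    method: 'POST',
    headers: { 'Content-Type': 'application/x-www-form-urlencoded' },
    body: buildFakeTranscriptionBody(event),
  });
  return response.status; // the route responds with 200 OK
}

// Usage, with your Express server running in another terminal:
//   sendFakeTranscription('http://localhost:3000/transcription', {
//     callSid: 'CA_TEST', transcript: 'Testing one two three', confidence: 0.95,
//   }).then((status) => console.log('Server responded with', status));
```

This is only a local check of your route's parsing and logging; the real test is still a phone call, since only Twilio sends genuine transcription events.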

That's how to use Twilio’s Real-Time Transcription Service in Node.js

You've just built a voice application using Twilio's Real-Time Transcription service. Your application can now capture and process spoken words as they happen during phone calls. This opens up possibilities for enhancing customer experiences and automating voice interactions.

Consider expanding your application with Twilio’s Conversational Intelligence, using the intelligenceService attribute to add advanced analytics and insights to your transcriptions. When enabled, you can also store transcripts and run post-call language operators.

Dhruv Patel is a Developer on Twilio’s Developer Voices team. You can find Dhruv working in a coffee shop with a glass of cold brew, or you can reach him at dhrpatel [at] twilio.com.
