Build an Omni-Channel AI Agent with Twilio Agent Connect and Amazon Bedrock AgentCore

Building an AI agent is relatively straightforward. But building an omnichannel AI Agent and operationalizing it in production – across channels, with persistent memory, low-latency streaming, and session continuity – that’s a different challenge entirely. It requires careful planning around infrastructure, channel management, memory architecture, and real-time performance.

The best way to explore these challenges is by building something real. So we built SkyOwl Airlines – a production-grade reference architecture for a voice and SMS AI customer service agent using Twilio Agent Connect (TAC) and Amazon Bedrock AgentCore. In this post, we’ll walk you through the key architectural concepts, best practices, product integrations, and code as we build it out.

View the full reference architecture on GitHub →

Prerequisites

To follow along with this reference architecture, you’ll need:

- An AWS account with Amazon Bedrock model access (our build uses Claude Haiku 4.5)

- The AWS CDK CLI and the AgentCore CLI (bedrock-agentcore) installed

- A Twilio account (if you don’t have one yet, sign up here)

- A Twilio phone number with Voice and SMS capabilities

- An API key and secret (you'll create these during setup)

- Python 3.12+, Node.js 22+, and Docker installed

- Basic familiarity with Twilio and AWS

Architecture overview

SkyOwl Airlines is a customer service agent that handles voice calls and SMS messages. A customer can call to check on a delayed flight, change their seat, or look up a reservation – and then follow up over SMS without repeating themselves.

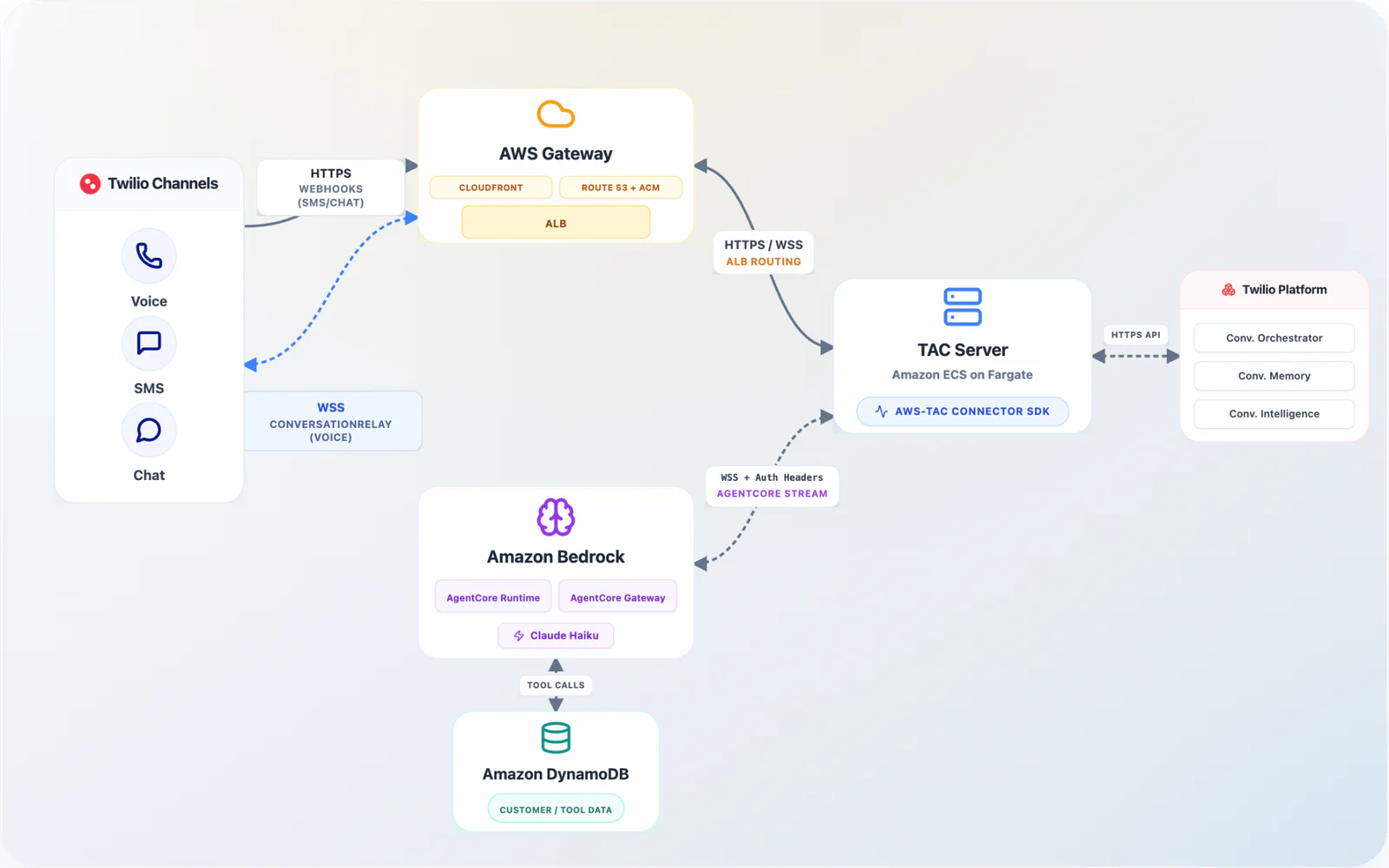

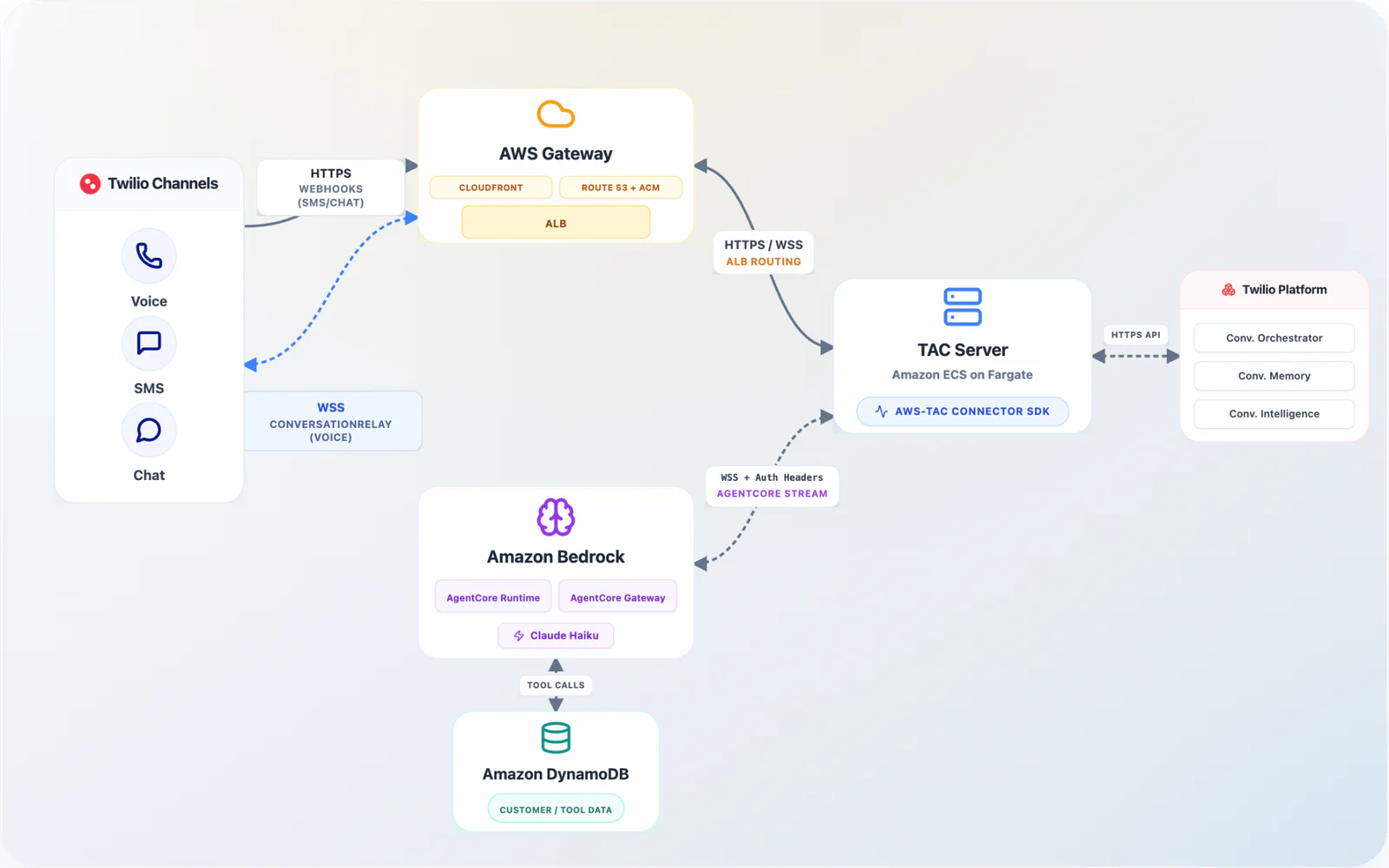

Here’s how the pieces connect:

Let’s walk through the key components:

On the Twilio side:

- Twilio Agent Connect (TAC) is the middleware that connects Twilio’s communication channels to your AI agent. It handles webhook routing, WebSocket management, and memory retrieval – allowing you to focus on your agent, not the plumbing.

- Twilio Conversation Orchestrator manages the conversation lifecycle. It groups conversations per caller and per channel, tracks state, and coordinates between Memory and Intelligence.

- Twilio Conversation Memory stores persistent customer profiles. Every interaction generates observations – structured facts like “Customer prefers window seats” or “Customer expressed frustration about delay” – along with conversation summaries. These persist across all future conversations.

- Twilio Conversation Intelligence analyzes conversations in real-time for sentiment and other signals, and generates post-conversation summaries, without additional code on your part.

- Twilio Conversation Relay bridges voice calls via WebSocket, handling speech-to-text and text-to-speech with configurable providers (Deepgram for STT, Google for TTS in our case).

On the AWS side:

- Amazon Bedrock AgentCore hosts the agent in isolated microVMs with built-in session persistence. Each agent instance runs in its own sandbox, scales independently, and maintains conversation state across turns.

- Strands Agents SDK is the open-source agent framework from AWS. It provides the agent loop, tool calling, and streaming – and deploys natively to Bedrock AgentCore.

- AWS TAC Connector SDK (tac-aws) is the AWS-specific connector that wires TAC to Bedrock AgentCore, Strands, and Bedrock. It handles channel routing, WebSocket pooling, memory injection, and agent lifecycle – reducing your integration code to roughly 100 lines.

Key highlights: Token streaming and profile-pinned sessions

Two architectural decisions define SkyOwl Airlines’ production readiness.

Bidirectional WebSocket with token streaming

Voice demands real-time responsiveness. If you buffer the full LLM response before starting text-to-speech, the caller hears dead air – wait too long and the interaction will start to feel unnatural.

Our architecture uses bidirectional streaming over a WebSocket between the TAC server and AgentCore Runtime, forwarding tokens individually as the model generates them. Using Haiku 4.5 in the us-east-1 region, we measured time-to-first-token as low as 0.45 seconds in some cases, which means the caller hears the agent start speaking almost immediately (and well within common latency targets).

For SMS, where latency is less critical, we use a standard HTTP invocation with a buffered response. The TAC-AWS connector handles this channel routing automatically – voice goes through WebSocket, SMS goes through HTTP.

Here’s how the connector is wired in the TAC server:

That’s the entire integration. The connector creates the voice and SMS channels, registers the TAC callbacks, manages WebSocket pooling, injects memory context, and routes responses to the correct channel.
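
To make the routing idea concrete, here is a minimal, self-contained sketch of the "stream for voice, buffer for SMS" split. It does not use the tac-aws connector or its API; the function names (fake_agent_tokens, respond, collect) and the canned tokens are hypothetical stand-ins for illustration only.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable


async def fake_agent_tokens(prompt: str) -> AsyncIterator[str]:
    """Hypothetical stand-in for a model stream: yields tokens one at a time."""
    for token in ["Your ", "flight ", "is ", "delayed."]:
        await asyncio.sleep(0)  # hand control back to the loop, like a network read
        yield token


async def respond(
    channel: str, prompt: str, send: Callable[[str], Awaitable[None]]
) -> None:
    """Stream each token for voice; buffer the whole reply for SMS."""
    if channel == "voice":
        async for token in fake_agent_tokens(prompt):
            await send(token)  # forwarded immediately so speech can start early
    elif channel == "sms":
        parts = [token async for token in fake_agent_tokens(prompt)]
        await send("".join(parts))  # one complete message
    else:
        raise ValueError(f"unsupported channel: {channel}")


async def collect(channel: str) -> list[str]:
    """Run respond() and record every chunk handed to the channel."""
    sent: list[str] = []

    async def send(chunk: str) -> None:
        sent.append(chunk)

    await respond(channel, "What is the status of OWL-101?", send)
    return sent


voice_chunks = asyncio.run(collect("voice"))
sms_chunks = asyncio.run(collect("sms"))
```

In this sketch the voice path produces four separate chunks while the SMS path produces a single joined message, which mirrors the latency trade-off described above.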

Profile-pinned Bedrock AgentCore Sessions

Bedrock AgentCore runs each agent in an isolated microVM. We pin sessions to the customer’s profile_id – not the conversation_id:

Why does this matter? When a customer calls back – or switches from SMS to voice – they reconnect to the same agent instance with full conversation history, as long as they return within the Bedrock AgentCore idle timeout window (see AgentCore Runtime lifecycle settings). The Conversation Orchestrator manages the Twilio-side lifecycle, grouping conversations by profile by default (or, alternatively, by address and channel, or by address alone), while profile-based session pinning manages agent-side continuity.
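
One way to picture profile pinning is a deterministic mapping from profile_id to a session identifier. The sketch below is an assumption-laden illustration, not the reference architecture's code: the helper name, the "skyowl" namespace, and the "profile-" prefix are hypothetical, and the minimum length constant reflects our understanding of AgentCore's session ID constraint, so check the current API reference before relying on it.

```python
import hashlib

# Assumption: AgentCore session IDs have a minimum length; verify the
# exact limit in the current InvokeAgentRuntime documentation.
MIN_SESSION_ID_LENGTH = 33


def session_id_for_profile(profile_id: str, namespace: str = "skyowl") -> str:
    """Derive a stable session ID from a profile ID (hypothetical helper)."""
    digest = hashlib.sha256(f"{namespace}:{profile_id}".encode("utf-8")).hexdigest()
    session_id = f"profile-{digest}"
    if len(session_id) < MIN_SESSION_ID_LENGTH:
        raise ValueError("session ID is shorter than the assumed minimum")
    return session_id


# The same customer maps to the same session regardless of which
# conversation (SMS or voice) the request arrived on.
sms_session = session_id_for_profile("profile-jane")
voice_session = session_id_for_profile("profile-jane")
other_session = session_id_for_profile("profile-john")
```

Because the ID depends only on the profile, a dropped call or a channel switch resolves to the same session, while a different customer resolves to a different one.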

Building the core: Agent and TAC Server

The Agent

The SkyOwl agent is built with the Strands Agents SDK. It has two entry points (see AgentCore Runtime service contract) – @app.websocket for voice (bidirectional streaming with Conversation Relay) and @app.entrypoint for SMS (request-response) – but it’s the same agent with the same tools and knowledge.

Here’s the voice handler, where tokens stream back as the model generates them:

The agent is channel-aware through its system prompt. Voice responses are concise — one to two sentences, no bullet points. SMS responses include more detail with confirmation codes spelled out. Same agent, same tools, different personality depending on how the customer reaches out.

We chose Anthropic’s Claude Haiku 4.5 deliberately for its low response latency. Haiku delivered the speed-to-quality ratio that made voice feel most natural to us. Sonnet and Opus reason more deeply, but for this build, responsiveness beat reasoning depth.

The agent has three DynamoDB tools – lookup_reservation, lookup_available_seats, and change_seat – that handle the core airline operations. Tool calls stream back over the WebSocket too, so the TAC server can provide “looking that up for you” feedback while the tool executes.
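
The tool feedback behavior can be sketched as a small filter over the event stream. The event shapes here (dictionaries with a "type" of "token", "tool_start", or "tool_result") and the filler phrase are hypothetical; the real stream format used by the agent runtime and connector may differ.

```python
from typing import Iterable, Iterator

FILLER = "One moment while I look that up."


def to_speech_chunks(events: Iterable[dict]) -> Iterator[str]:
    """Turn a stream of (hypothetical) agent events into text to speak."""
    for event in events:
        if event["type"] == "tool_start":
            yield FILLER  # fill the silence while the tool runs
        elif event["type"] == "token":
            yield event["text"]
        # Other event types, such as tool results, are not spoken.


events = [
    {"type": "token", "text": "Sure. "},
    {"type": "tool_start", "name": "lookup_reservation"},
    {"type": "tool_result", "name": "lookup_reservation"},
    {"type": "token", "text": "You are in seat 14A."},
]
chunks = list(to_speech_chunks(events))
```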

The TAC server

The server runs the Twilio Agent Connect SDK, which bridges Twilio’s SMS and Voice channels with Bedrock AgentCore – and with the Twilio-built AWS connector, it’s remarkably thin. TACFastAPIServer automatically creates the webhook and WebSocket routes (/twiml, /ws, /webhook, /conversation-relay-callback), while the connector handles memory retrieval, channel routing, and agent lifecycle. Twilio webhook signature validation protects all inbound endpoints.
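
For readers unfamiliar with signature validation, the sketch below shows Twilio's documented scheme for form-encoded HTTP webhooks using only the standard library: append each POST parameter name and value (sorted by name) to the full URL, sign with HMAC-SHA1 using the account auth token, Base64-encode the result, and compare it with the X-Twilio-Signature header. The token, URL, and parameters are made-up examples. In a real service you would more likely rely on the RequestValidator class in Twilio's Python helper library, and the TAC server may perform this check for you.

```python
import base64
import hashlib
import hmac


def compute_signature(auth_token: str, url: str, params: dict[str, str]) -> str:
    """Compute the expected signature for a form-encoded webhook request."""
    payload = url + "".join(f"{key}{params[key]}" for key in sorted(params))
    mac = hmac.new(auth_token.encode("utf-8"), payload.encode("utf-8"), hashlib.sha1)
    return base64.b64encode(mac.digest()).decode("ascii")


def is_valid_request(
    auth_token: str, url: str, params: dict[str, str], signature: str
) -> bool:
    """Compare signatures in constant time to avoid timing leaks."""
    expected = compute_signature(auth_token, url, params)
    return hmac.compare_digest(expected, signature)


# Made-up values for illustration only.
token = "example-auth-token"
url = "https://example.com/webhook"
params = {"From": "+15555550100", "Body": "What is the status of OWL-101?"}

header_value = compute_signature(token, url, params)
accepted = is_valid_request(token, url, params, header_value)
rejected = is_valid_request(token, url, {**params, "Body": "tampered"}, header_value)
```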

Memory that spans Conversations and Channels

This is where Twilio’s Conversations products come together in a way that changes the customer experience.

Your Agent starts warm

Every interaction – voice or SMS – generates observations in Twilio Conversation Memory. These are structured facts extracted automatically from the conversation:

- “Customer Jane Smith has confirmation ABC123 on flight OWL-101”

- “Customer prefers window seats”

- “Customer expressed frustration about the 2-hour delay”

- “Customer changed seat from 14A to 14B”

These observations persist in the customer’s memory profile, identified by phone number. Post-conversation summaries generated by Twilio Conversation Intelligence are stored alongside them.

On the next interaction – whether it’s 5 minutes or 5 days later – TAC auto-retrieves this memory and injects it into the agent before the first word is spoken. The agent doesn’t start cold. It starts with context:

Instead of the dreaded:

This is the difference between an AI agent that feels like a tool and one that feels like a service.

Cross-channel continuity

Here’s the scenario that showcases the full power of this architecture:

- Jane sends an SMS: “What’s the status of my flight OWL-101?”

- The agent looks up her reservation, reports the 2-hour delay, and offers to help with rebooking.

- Conversation Memory stores observations: flight details, delay notification, customer sentiment.

- Ten minutes later, Jane calls the same number.

- TAC retrieves Jane’s memory profile – including everything from the SMS conversation.

- The agent picks up where SMS left off: “Hi Jane, I see you texted about the OWL-101 delay. Would you like me to look at rebooking options?”

This works because memory is tied to the customer profile (phone number), not the channel or individual conversation. The Conversation Orchestrator manages the per-channel conversation lifecycle. Conversation Memory provides the persistent thread that ties everything together. And profile-based AgentCore Runtime sessions mean the agent instance itself retains the full context.
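
The key point, that memory is keyed by profile rather than by channel or conversation, can be illustrated with a toy in-memory store. ProfileMemory is a hypothetical stand-in, not the Twilio Conversation Memory API, and the phone numbers are fictional.

```python
class ProfileMemory:
    """Toy stand-in for a memory store keyed by customer profile."""

    def __init__(self) -> None:
        self._observations: dict[str, list[str]] = {}

    def add(self, profile_key: str, observation: str) -> None:
        self._observations.setdefault(profile_key, []).append(observation)

    def recall(self, profile_key: str) -> list[str]:
        # Return a copy so callers cannot mutate stored memory by accident.
        return list(self._observations.get(profile_key, []))


memory = ProfileMemory()
jane = "+15555550100"  # fictional number acting as the profile key

# Observations written during the SMS conversation...
memory.add(jane, "Asked about flight OWL-101 over SMS")
memory.add(jane, "Was told about the 2-hour delay")

# ...are available when the voice call starts, because the lookup
# uses the profile key and never mentions the channel.
context_for_voice_call = memory.recall(jane)
```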

Decisions that matter in production

Building SkyOwl Airlines surfaced several architectural decisions that matter for anyone taking AI agents to production. Here are the ones worth sharing.

Why your agent’s system prompt is the safest place for memory

When you retrieve Conversation Memory – observations, summaries, past interactions – you need to inject it somewhere the model can reason about it.

If you choose to inject memory context into the agent’s message history, downstream memory systems will re-extract those injected facts as new observations. You’d get a feedback loop: memory duplicating itself every turn, accumulating noise with each conversation.

Strands Agents lets you modify agent.system_prompt at runtime. We inject memory there – visible to the model for reasoning, invisible to memory extraction pipelines. Clean separation, no pollution.
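
As a rough sketch of this separation, the helper below renders retrieved memory into the system prompt text and leaves the message history untouched. The base prompt wording, section titles, and function name are hypothetical; only the idea of assigning the result to agent.system_prompt comes from the approach described above.

```python
BASE_PROMPT = "You are the SkyOwl Airlines customer service agent."


def build_system_prompt(
    base: str, observations: list[str], summaries: list[str]
) -> str:
    """Append retrieved memory to the system prompt text."""
    sections = [base]
    if observations:
        facts = "\n".join(f"- {item}" for item in observations)
        sections.append(f"Known facts about this customer:\n{facts}")
    if summaries:
        recaps = "\n".join(f"- {item}" for item in summaries)
        sections.append(f"Summaries of past conversations:\n{recaps}")
    return "\n\n".join(sections)


# Conversation turns only; memory is never appended here, so an
# extraction pipeline reading the history will not see it again.
messages: list[dict] = []

prompt = build_system_prompt(
    BASE_PROMPT,
    observations=["Customer prefers window seats"],
    summaries=[],
)
# With Strands, a string like this would be assigned to agent.system_prompt.
```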

Why profile_id beats conversation_id for AgentCore sessions

We key AgentCore Runtime sessions on profile_id rather than conversation_id. If you key on conversation_id, a dropped call means a new conversation, a new microVM, and a blank agent history – the customer starts over. Worse, Twilio's Orchestrator groups conversations by participant address AND channel type, so the same customer switching from SMS to Voice gets a different conversation_id and a fresh agent instance with no memory of the prior exchange.

Key on profile_id instead. The same customer reconnects to the same warm microVM with full conversation context, within AgentCore Runtime's idle timeout (default 15 minutes, max 8 hours). The returning caller experience goes from "Can you repeat your confirmation code?" to "Picking up where we left off."
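
A small simulation shows how the choice of key interacts with the idle timeout. SessionRegistry is a hypothetical model of session reuse, not AgentCore's implementation; it uses the 15-minute default mentioned above and plain numeric timestamps in seconds.

```python
from dataclasses import dataclass, field

IDLE_TIMEOUT_SECONDS = 15 * 60  # the default idle timeout cited above


@dataclass
class Session:
    key: str
    last_seen: float
    history: list[str] = field(default_factory=list)


class SessionRegistry:
    """Hypothetical model: reuse a session until it has idled too long."""

    def __init__(self, idle_timeout: float = IDLE_TIMEOUT_SECONDS) -> None:
        self._sessions: dict[str, Session] = {}
        self._idle_timeout = idle_timeout

    def get(self, key: str, now: float) -> Session:
        session = self._sessions.get(key)
        if session is None or now - session.last_seen > self._idle_timeout:
            session = Session(key=key, last_seen=now)  # cold start
            self._sessions[key] = session
        session.last_seen = now
        return session


registry = SessionRegistry()

# Keyed on profile: the SMS turn and the call ten minutes later share history.
registry.get("profile-jane", now=0).history.append("SMS: asked about OWL-101")
warm = registry.get("profile-jane", now=600)

# Keyed on conversation: the call gets a new key and an empty history.
registry.get("conv-sms-1", now=0).history.append("SMS: asked about OWL-101")
cold = registry.get("conv-voice-2", now=600)

# Returning after the idle timeout also starts fresh.
expired = registry.get("profile-jane", now=600 + IDLE_TIMEOUT_SECONDS + 1)
```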

Additionally, profile_id maps naturally to AgentCore Memory's concept of actor_id – if you plan to use AgentCore Memory for hierarchical short-term or long-term storage alongside Twilio Conversation Memory, the session identity is already aligned.

Why a single channel-aware agent, not separate agents

Our architecture means one agent can have two personalities. The system prompt adapts based on the channel context passed in each request:

- Voice: “Keep every response to 1-2 short sentences. No bullet points.”

- SMS: “Respond in 2-3 concise sentences. Include key details inline.”

Same tools, same knowledge, half the maintenance. When you add a new capability – say, rebooking flights – you add it once. When you need to add voice or SMS specific instructions, you know exactly where to put them.
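
A minimal sketch of the channel-aware prompt, using the two instructions quoted above. The base prompt text, dictionary name, and function name are hypothetical.

```python
BASE_PROMPT = "You are the SkyOwl Airlines customer service agent."

CHANNEL_INSTRUCTIONS = {
    "voice": "Keep every response to 1-2 short sentences. No bullet points.",
    "sms": "Respond in 2-3 concise sentences. Include key details inline.",
}


def prompt_for_channel(base: str, channel: str) -> str:
    """Combine the shared base prompt with channel-specific instructions."""
    if channel not in CHANNEL_INSTRUCTIONS:
        raise ValueError(f"unsupported channel: {channel}")
    return f"{base}\n\n{CHANNEL_INSTRUCTIONS[channel]}"


voice_prompt = prompt_for_channel(BASE_PROMPT, "voice")
sms_prompt = prompt_for_channel(BASE_PROMPT, "sms")
```

Adding a third channel in this sketch means adding one dictionary entry; the tools and base prompt stay shared.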

For more complex use cases that call for multiple agents, Amazon Bedrock AgentCore lets you deploy and run Agent-to-Agent (A2A) servers in the AgentCore Runtime (see A2A servers in AgentCore Runtime). We recommend a multi-agent setup when agents have distinct responsibilities and development requirements, so that each one keeps a clear separation of concerns.

Deploy and try it

Ready to try the AWS and TAC reference demo? You can find our repo here. To deploy:

- Clone the repo and copy .env.example to .env. Fill in Twilio credentials and other required variables as shown in .env.example section 1.

- Run make setup – this validates prerequisites, creates Twilio resources (Memory Store, Intelligence Configuration, Conversation Configuration), deploys the Strands Agent to AgentCore, provisions DynamoDB tables, and seeds demo data. All of this is automated with CDK. Watch progress in CloudFormation.

- Run make run-ngrok if you have access to ngrok in your environment. This starts the TAC server locally and exposes it over ngrok, configures the Twilio Voice URL and Messaging callback with the correct ngrok domain, and starts a local demo dashboard to explore.

- [Optional, requires Docker/Finch] If ngrok is not available, run make run-cloudfront – this deploys the TAC server and dashboard to ECS Fargate behind CloudFront and ALB, and auto-configures all Twilio webhooks with the deployed domain. No manual Twilio Console steps. If you prefer to use your own custom domain, use make run-custom-domain to deploy both the ECS tasks behind Route 53 and ACM.

- Open the demo dashboard using the domain output by your previous run command. Follow the demo scenario steps.

- Send an SMS to your Twilio number. Ask about “reservation ABC123 for Jane Smith”. Ask for reservation confirmation. Change seats. Say you will call back to verify refund eligibility.

- Then call the same number to continue the conversation on a different channel. Watch the agent pick up where you left off.

The included dashboard at /dashboard gives you visibility into DynamoDB data, Conversation Memory profiles, Orchestrator conversations, Intelligence results, and real-time CloudWatch logs from both the TAC server and the agent runtime.

Conclusion

We built SkyOwl Airlines to explore what it takes to bring omnichannel AI agents into production across voice and SMS. The combination of Twilio Agent Connect, Twilio Conversation Memory, and Twilio Conversation Orchestrator with Amazon Bedrock AgentCore and Strands Agents gives you managed infrastructure, persistent cross-channel memory, real-time voice streaming, and isolated agent runtimes — the building blocks for AI agents that customers actually want to talk to.

Explore the reference architecture on GitHub, try the deployment, and extend it with your own tools and channels.

Rachel Baskin is a Principal Project Manager on the Twilio Product team. Her passions are solving customer pain points and integrating product development with analytics for data-driven decision-making. She also loves playing Settlers of Catan and watching Survivor. Connect with Rachel at rbaskin [at] twilio.com.

Akshay Dhawale is a Sr. Solutions Architect at Amazon Web Services, partnering with digital-native companies to build resilient, scalable systems across cloud, data, and AI. He focuses on emerging patterns in generative and agentic AI, real-time voice, and customer data systems—bringing a first-principles lens to complex, evolving architectures. Outside of work, he plays polo every week with the Dallas Polo Club, and has played in three of the top five polo-playing countries. You can find Akshay on LinkedIn.
